DataOps.live Develop

What is the DataOps development environment?

The DataOps development environment is a ready-to-code environment that follows the basic principles of continuous development. It gives you a highly optimized data development experience for key DataOps use cases with little or no setup, depending on whether you deploy locally or in the cloud.

See the deployment models for more information.

As a developer, you may have experienced how annoying it can be to:

- Figure out which tools to install and how to configure them correctly when onboarding to a new project

- Manually set up the development environment every time you need to start fresh and get a clean slate

- Adjust parts of the development environment when you need to switch between different versions of a project

- Manually clean up afterward so that checkouts, dependencies, builds, databases, and the like do not pollute the local system

- Constantly maintain the correct versions of tens or hundreds of libraries

The DataOps development environment changes this workflow, removing friction and improving your experience through automation and collaboration. It has three main goals:

- Eliminate all the work and hassle of creating and updating local development environments

- Allow developers to make changes and test those in seconds, not minutes or hours

- Enable developers to work in an environment virtually identical to the real pipelines where the code will eventually run, eliminating the common 'well, it worked on my laptop' issue

The DataOps development environment does not support Snowflake Private Link yet, but it's on our roadmap. Reach out to our Support team for more information.

Deployment models

You can use the DataOps development environment with two deployment topologies: browser-based or on your desktop. With either deployment model, the DataOps development environment helps you speed up the development process. It allows you to automatically assemble all the resources you need to create a more robust and flexible ecosystem.

Depending on your needs, you can choose between:

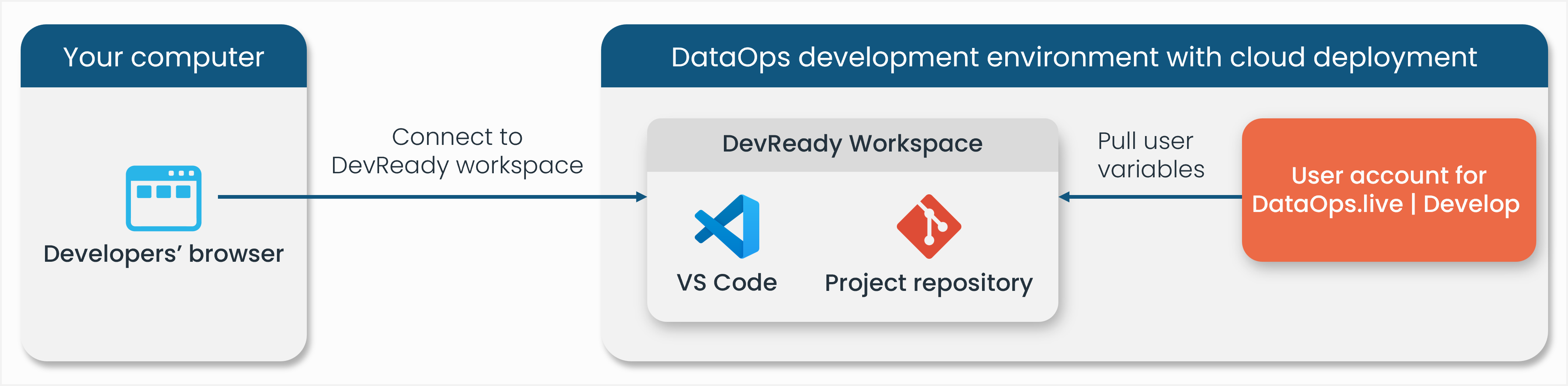

- DevReady: You only need your internet browser to develop. The rest is managed inside DevReady.

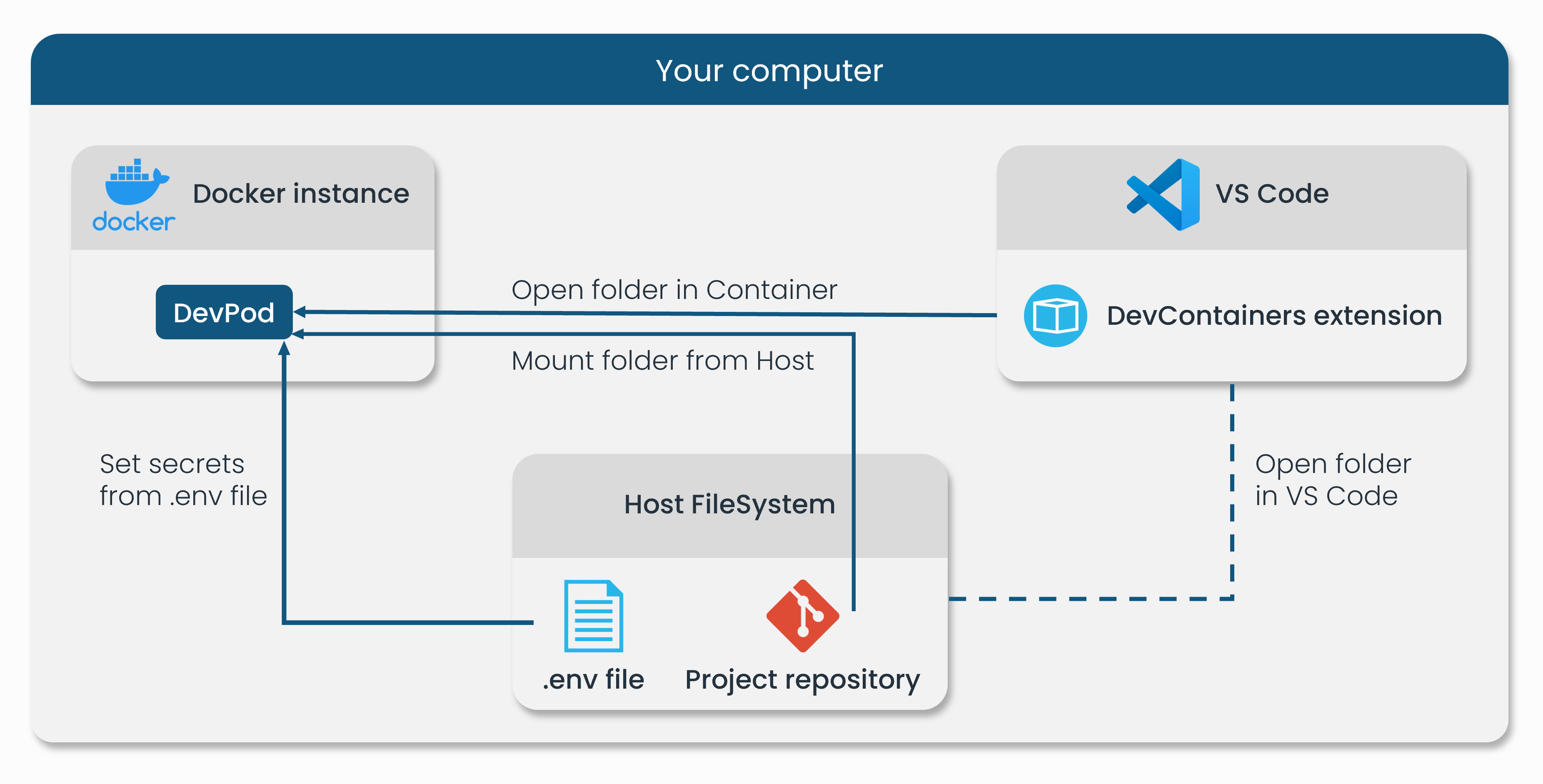

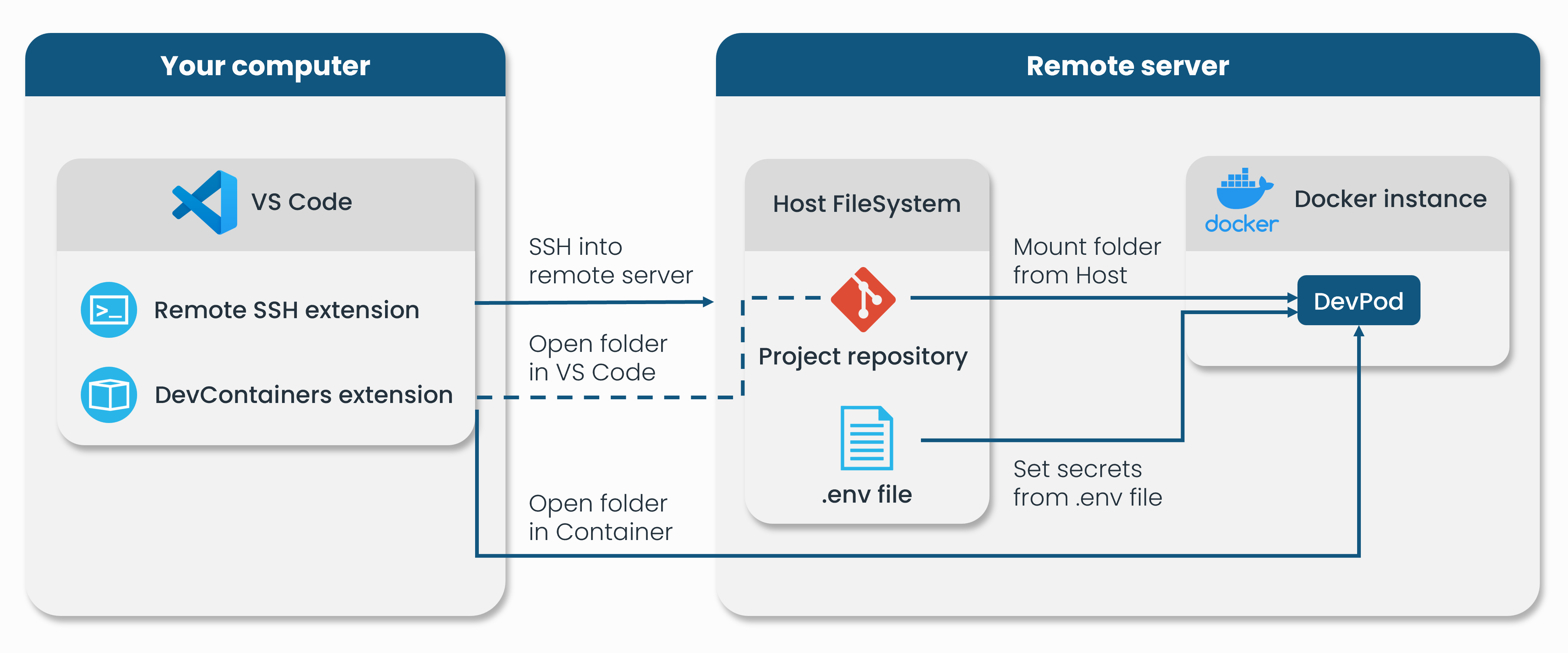

- DevPod: You only need your computer to develop. DevPod uses Dev Containers to provide the same suite of tools available in DevReady. You can deploy the Dev Containers on your local machine or a remote server.

Here are diagrams to represent each scenario:

DevReady

DevReady meets the needs of a wide range of use cases. It streamlines the development process and gives you the flexibility and scalability of cloud computing resources. This browser-based development environment incorporates Single Sign-On capabilities to streamline and secure user access across Snowflake accounts. For more information, see the Secure user access to Snowflake documentation.

You can use DevReady with Visual Studio Code (VS Code), which supports full-featured Git integration. However, you must add our static IP addresses to your allowlist. For more information, see the DataOps.live static IP addresses.

DevPod

Some organizations prefer to work with local development environments, for example because of data sensitivity or security concerns. Depending on your Snowflake structure, if you want to avoid keeping sensitive information in the cloud or allowing DevReady to connect to Snowflake, you can use DevPod. For more information on installation and setup, see DevPod.

DevPod leverages Dev Containers and provides two deployment choices. First, a fully local deployment:

Second, a deployment with VS Code on your local machine and the Docker-based Dev Container on a remote computer:

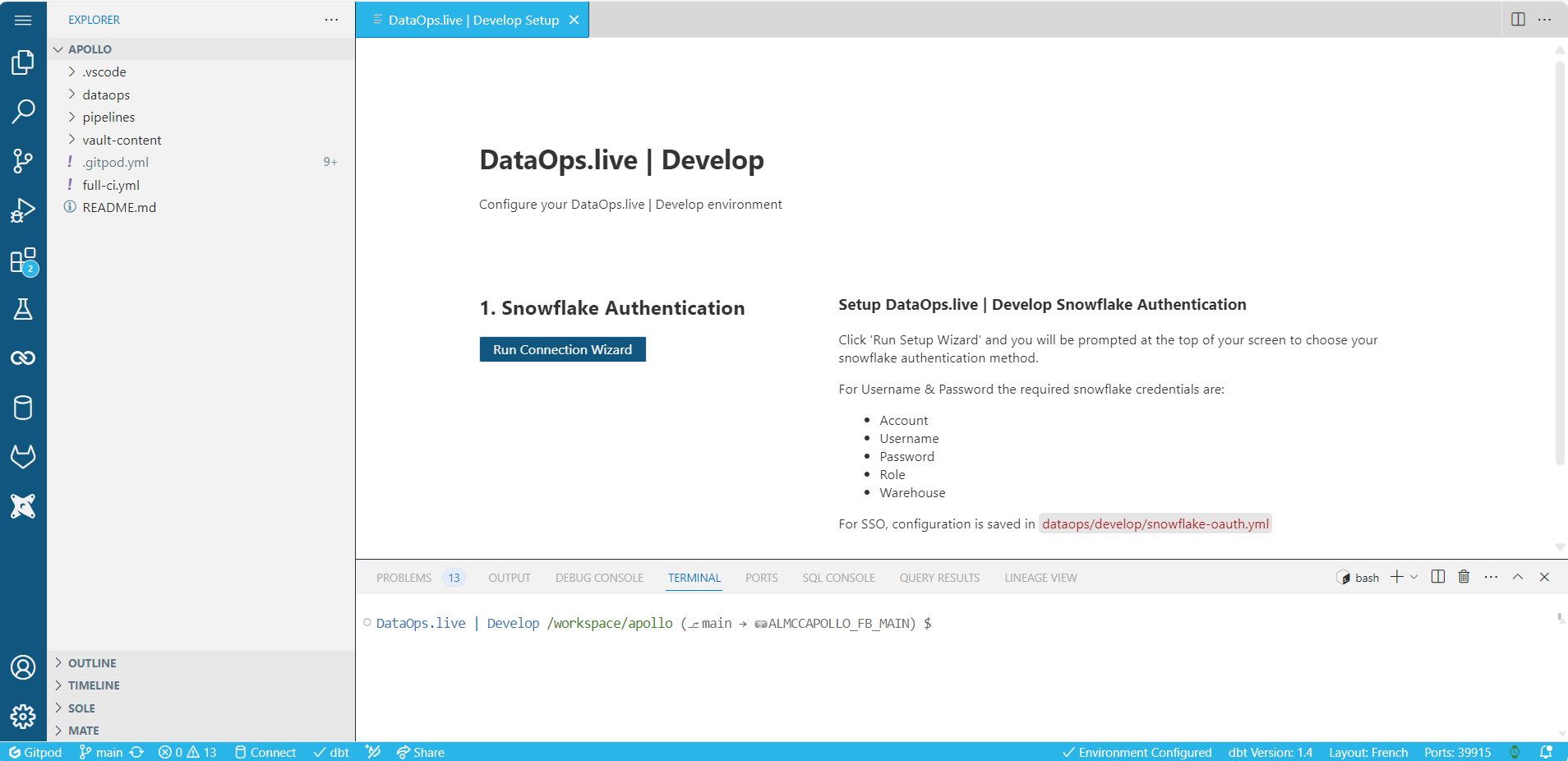

Using the DataOps development environment

To explore the DataOps development environment and the problems you can solve with either of its deployment models, see the topics below, which describe how to set it up, present key DataOps use cases, and detail the tools it includes: