How to Create Fallback Jobs

What are fallback jobs

A fallback job acts as a retry mechanism. It attempts your main job again with a different container image or other configuration changes.

You can create a fallback job for specific jobs. If the primary job fails, the fallback job steps in and tries to finish successfully.

Why do you need them

By incorporating fallback jobs into a pipeline, developers and data engineers can make the system more reliable and fault tolerant. Fallback jobs provide a safety net so that critical processes can keep running and potentially recover from failures.

You might need them if you want to use a feature that is part of our latest orchestrator release. Adding a fallback job that uses the stable release improves the pipeline's reliability, because the stable image can take over if the job fails on the latest one.

How to implement them

- Specify a reusable configuration for both jobs.
- Create the main job that extends from the common config.
  - It must use the `allow_failure: true` flag.
  - At the end of a successful run, the job creates a file with some hard-coded content: `echo "success" > "$CI_JOB_NAME"`
  - It must upload the file as an artifact.
- Create the second job that extends from the common config.
  - It must add the appropriate `needs`.
  - It must check whether the file created upon the success of the main job is there or not.
  - Override the `image` property or any other property of interest.
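
The handshake described in the steps above can be tried locally before wiring it into a pipeline. The following is a minimal sketch, not the product's implementation: `run_main`, `run_fallback`, and the `MARKER` variable are hypothetical names introduced for illustration, and `MARKER` stands in for the `"$CI_JOB_NAME"` file that the main job would upload as an artifact.

```shell
#!/bin/sh
# Local simulation of the marker-file handshake between a main job and its fallback.
# MARKER is an assumed stand-in for the "$CI_JOB_NAME" artifact file.
MARKER="${MARKER:-main-job-marker}"

# Hypothetical main job: runs the given command and writes the marker only on success.
run_main() {
  rm -f "$MARKER"
  if "$@"; then
    echo "success" > "$MARKER"
  fi
  # Mirrors allow_failure: true, so a failure here does not stop what follows.
  return 0
}

# Hypothetical fallback job: runs the given command only when the marker
# is missing or does not contain "success".
run_fallback() {
  if [ "$(cat "$MARKER" 2>/dev/null)" != "success" ]; then
    "$@"
  else
    echo "main job succeeded, fallback skipped"
  fi
}
```

For example, `run_main false; run_fallback echo "fallback ran"` runs the fallback command, while `run_main true; run_fallback echo "fallback ran"` skips it because the marker file contains `success`.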

Example

The pipeline configuration:

    include:
      - /pipelines/includes/bootstrap.yml

      ## Load Secrets job
      - project: reference-template-projects/dataops-template/dataops-reference
        ref: 5-stable
        file: /pipelines/includes/default/load_secrets.yml

    .setup:
      extends: .agent_tag
      variables:
        LIFECYCLE_ACTION: AGGREGATE
        ARTIFACT_DIRECTORY: $CI_PROJECT_DIR/snowflake-artifacts
        CONFIGURATION_DIR: $CI_PROJECT_DIR/dataops/snowflake
      resource_group: $CI_JOB_NAME
      stage: Snowflake Setup
      script:
        - /dataops
        - echo "success" > "$CI_JOB_NAME"
      artifacts:
        when: always
        paths:
          - $ARTIFACT_DIRECTORY
          - "$CI_JOB_NAME"
      icon: ${SNOWFLAKEOBJECTLIFECYCLE_ICON}

    Set Up Snowflake:
      extends:
        - .setup
      image: dataopslive/dataops-snowflakeobjectlifecycle-runner:5-latest
      allow_failure: true

    Set Up Snowflake fallback:
      extends:
        - .setup
      needs: ["Initialise Reference Project", "Set Up Snowflake"]
      image: dataopslive/dataops-snowflakeobjectlifecycle-runner:5-stable
      script:
        - if [ "$(cat 'Set Up Snowflake')" != "success" ]; then /dataops ; fi

    dummy:
      extends: .agent_tag
      stage: Additional Configuration
      image: alpine
      needs: ["Set Up Snowflake fallback"]
      script:
        - echo "dummy sample job success."
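
In this configuration the marker file is named after the main job, so its name contains spaces (`Set Up Snowflake`), and both the write and the read depend on quoting. The sketch below illustrates that detail outside a pipeline. It assumes `CI_JOB_NAME` is assigned by hand (GitLab sets it in a real job), and `write_marker` and `marker_status` are hypothetical helpers, not part of any runner image.

```shell
#!/bin/sh
# In a real pipeline GitLab sets CI_JOB_NAME; it is assigned here only for illustration.
CI_JOB_NAME="Set Up Snowflake"

# Main job side: the quotes keep the spaces, so exactly one file is created.
write_marker() {
  echo "success" > "$CI_JOB_NAME"
}

# Fallback side (hypothetical helper): report whether the named job left a success marker.
# A missing file is treated the same as a failed main job.
marker_status() {
  if [ "$(cat "$1" 2>/dev/null)" = "success" ]; then
    echo "succeeded"
  else
    echo "failed-or-missing"
  fi
}
```

Calling `marker_status 'Set Up Snowflake'` before `write_marker` reports `failed-or-missing`, which is the case where the fallback job would run `/dataops` itself.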

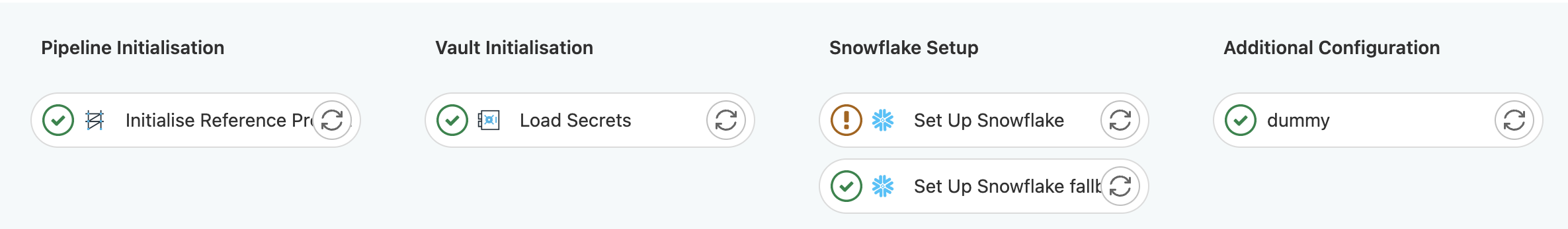

Successful execution of the main job:

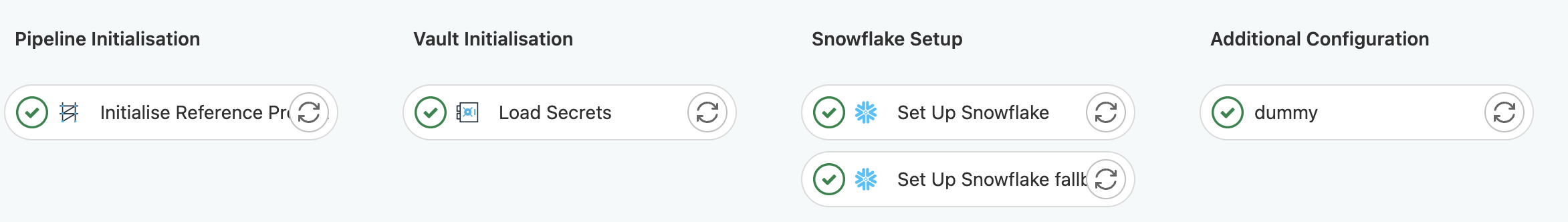

Failure of the main job and recovery through the fallback job: