How to Pass Variables from a Pipeline to MATE

Passing variables to MATE through a DataOps pipeline is particularly useful when you want to reuse the same pipeline for different operations instead of creating duplicate pipeline files. There are two standard ways to define a MATE variable: in dbt_project.yml, or by passing a --vars argument alongside the TRANSFORM_ACTION. This example shows the latter, using the variable start_date.
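
For comparison, the dbt_project.yml option can be sketched as follows. This is a minimal fragment rather than a complete project file, and the date is an illustrative value that does not come from the example project. A value passed with --vars at run time takes precedence over a default defined this way.

```yaml
# dbt_project.yml (fragment)
vars:
  # Illustrative default only. The inner single quotes are kept so that the
  # value renders as a SQL string literal when a model interpolates it
  # directly with {{ var("start_date") }}.
  start_date: "'1995-01-01'"
```

A default like this suits values that rarely change; the --vars approach described in the rest of this page is the better fit when the value differs from run to run.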

Setting up the pipeline job

To dynamically change the variables without needing to change the pipeline, you can pass them via the TRANSFORM_EXTRA_PARAMS_AFTER parameter to the MATE orchestrator. For example:

    Build all Models:
      extends:
        - .modelling_and_transformation_base
        - .agent_tag
      variables:
        TRANSFORM_ACTION: RUN
        TRANSFORM_EXTRA_PARAMS_AFTER: '--vars {"start_date":${start_date}}'
      stage: Data Transformation
      script:
        - /dataops
        - echo $start_date
      icon: ${TRANSFORM_ICON}

You specify --vars as a simple dictionary in which the value of each key-value pair is a dynamic pipeline variable. Note that the script also echoes the value of start_date, so it appears explicitly in the job log.
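
Because --vars takes a dictionary, the same pattern should extend to more than one variable. The sketch below assumes a second pipeline variable named end_date, which is hypothetical and not part of the original example; everything else mirrors the job above.

```yaml
Build all Models:
  extends:
    - .modelling_and_transformation_base
    - .agent_tag
  variables:
    TRANSFORM_ACTION: RUN
    # end_date is a hypothetical second pipeline variable, shown for illustration only
    TRANSFORM_EXTRA_PARAMS_AFTER: '--vars {"start_date":${start_date},"end_date":${end_date}}'
  stage: Data Transformation
  script:
    - /dataops
  icon: ${TRANSFORM_ICON}
```

Each key in the dictionary then becomes available in MATE models through var(), in the same way as start_date.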

Setting up the MATE model

For the example, you will use the passed variable in a mart model:

    {{ config(alias='ORDERS') -}}
    SELECT o_orderkey      AS "Order Key",
           o_custkey       AS "Customer Key",
           o_orderstatus   AS "Order Status",
           o_totalprice    AS "Total Price",
           o_orderdate     AS "Order date",
           o_orderpriority AS "Order Priority",
           o_clerk         AS "Clerk",
           o_shippriority  AS "Ship Priority",
           o_comment       AS "Comment",
           (SELECT Count(*)
            FROM   {{ source('snowflake_sample_data_tpch_sf1', 'LINEITEM') }}
            WHERE  l_orderkey = o_orderkey
                   AND o_orderdate > Date({{ var("start_date") }})) AS "Number of Line Items"
    FROM   {{ source('snowflake_sample_data_tpch_sf1', 'ORDERS') }}

Running the pipeline

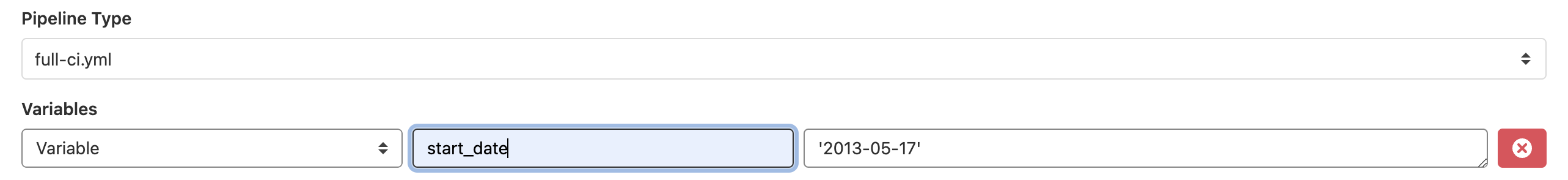

Once you set it up, you can run the pipeline from the project's pipeline view and specify the start_date as a one-off override:

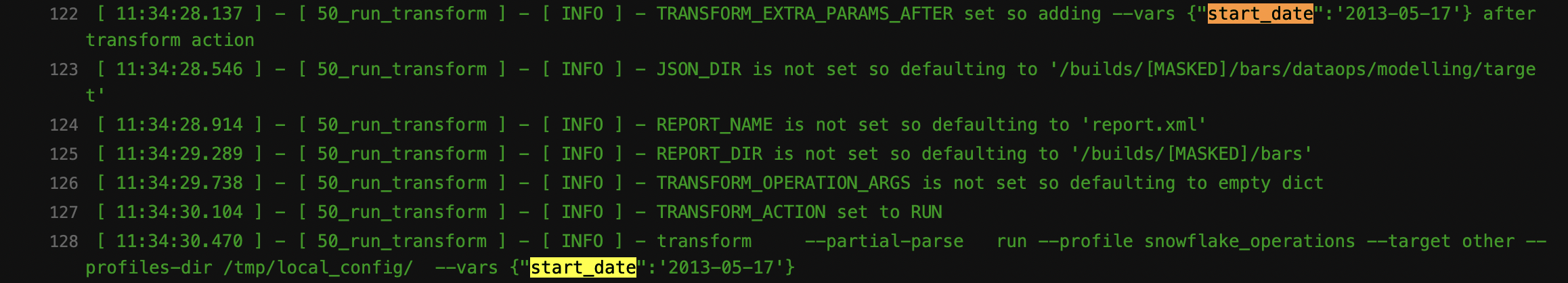

In the pipeline log files, you can monitor the run and inspect the variables as well.