SOLE Core Concepts

It is important to understand the core concepts of Snowflake Object Life Engine to use it effectively. Therefore, let's start with the concepts of lifecycle management and then move on to the following topics:

Lifecycle management

This section on lifecycle management looks at the following subjects:

- What is Lifecycle Management?

- The Lifecycle of an Object

- SOLE Actions

- SOLE Object Groups and Hierarchies

What is lifecycle management?

In summary, lifecycle management (LCM) is the overall administration of objects from conception through to end-of-life destruction. The Life Cycle Initiative describes LCM as follows:

LCM is a business management approach that can be used by all types of businesses to improve their sustainability performance... Its purpose is to ensure more sustainable value chain management... and is used to target, organize, analyze, and manage products... towards continuous improvement along the product lifecycle.

Read "Snowflake object" where this definition uses the terms "value chain" and "product."

Therefore, in the DataOps context, lifecycle management ensures that Snowflake objects are created, managed, and destroyed as needed, making sure that objects are not incorrectly and unnecessarily created, updated, or deleted.

Lifecycle of an object

Now that we understand lifecycle management, let's look at the lifecycle of a Snowflake object, as described by the following image:

Even before the object is created, the first step is analyzing the data to be ingested into Snowflake through DataOps. Typically, an object is created with a particular use in mind. For instance, a product type table (raw.product_types) is created to hold all an organization's product types that it manufactures or sells. The Build MATE Models doc shows how this base table is used as a foundation for the model, stg_product_types. Therefore, this raw.product_types table is an object within the Snowflake ecosystem and has a lifecycle.

Here is an example of the Snowflake object lifecycle:

1. Create object

The basic CREATE TABLE syntax for this table is as follows:

CREATE TABLE raw.product_types

(

prod_type_id INTEGER DEFAULT id_seq.nextval,

prod_type_code VARCHAR(6),

prod_type_desc VARCHAR(100)

)

2. Update/Maintain object

This table structure works for a while and then the structure must change to include additional fields (described in the following ALTER TABLE statement):

ALTER TABLE raw.product_types

ADD COLUMN active BOOLEAN DEFAULT true

3. Delete object

Let's assume that this table is no longer required or is deprecated. Therefore, the following DROP TABLE statement is used to delete the table from the database:

DROP TABLE raw.product_types

As we can see from these code snippets, this object lifecycle management process is manual. This isn't sustainable in the short, medium, or long term as described in the SOLE Fundamentals, Why SOLE guide. As a solution, SOLE takes a declarative approach to sustainable Snowflake object lifecycle management.

SOLE actions

As described above, SOLE manages the lifecycle of Snowflake Objects.

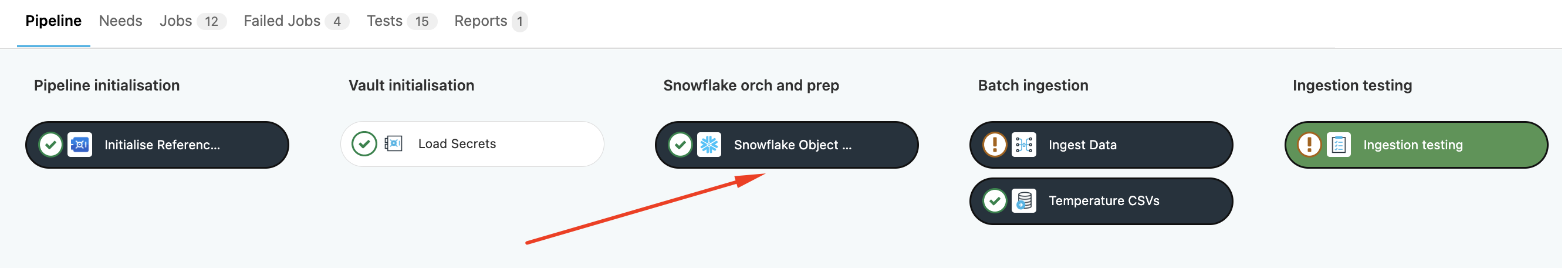

SOLE runs in the initial stages of the DataOps pipeline, as the following image demonstrates.

When SOLE runs in the pipeline, it compiles these configurations to work out object dependencies and resolves the environment and namespace to manage the specified objects effectively.

For instance, the following YAML configuration is a declarative description of the raw.product_types table described above:

databases:

RAW_DATABASE:

schemas:

RAW:

tables:

PRODUCT_TYPES:

columns:

PROD_TYPE_ID:

type: INTEGER

default: id_seq.nextval

PROD_TYPE_CODE:

type: VARCHAR(6)

PROD_TYPE_DESC:

type: VARCHAR(100)

comment: "Product Types Information"

table_grants:

UPDATE:

- STOCK_ROLE

- ACCOUNTADMIN

SOLE takes this file and looks at it together with all the other SOLE objects, including the roles and grants. This operation can be divided into two distinct actions:

The orchestration of the actions is controlled by the Snowflake Object Lifecycle (SOLE) Orchestrator. The orchestrator performs the specified action on the specified object groups.

Compilation

This action handles reading the user-specified configuration describing their Snowflake infrastructure and generates a machine-readable configuration that SOLE can execute to enact the specifications.

SOLE collects all the configuration files, works out the namespacing objects per environment, and resolves dependencies within objects. Upon successful compilation, resource definition and import files are generated and saved in object group-specific directories.

Once this stage is complete, the execution phase is triggered to manage the Snowflake objects within the Snowflake infrastructure.

Execution

Inspecting Snowflake to get the state of objects and applying changes if required is categorized as the execution phase of the Snowflake Object Lifecycle Engine.

Inspection collects the current state of the specified Snowflake object and saves it into the local state file. This local state is then used to plan the changes required to get the object to match the user configuration.

The following lifecycle actions are part of the execution phase:

- VALIDATE

- PLAN

- APPLY

- PLAN-DESTROY

- DESTROY

- AGGREGATE

- AGGREGATE-DESTROY

Object groups and hierarchies

SOLE divides Snowflake objects into four object groups:

ACCOUNT_LEVELDATABASEDATABASE_LEVELGRANT

The execute action is performed on each object group in the order given above.

If you want to control the order explicitly you can override it, using the following DataOps pipeline as the baseline. It shows the ACCOUNT_LEVEL group is executed before the DATABASE, DATABASE_LEVEL, and the GRANT groups.

Apply Account-Level:

extends:

- .snowflakelifecycle_apply_base

needs:

- job: Plan Account-Level

variables:

LIFECYCLE_MANAGE_OBJECT: ACCOUNT_LEVEL

stage: "Apply Account Objects"

Apply Databases:

extends:

- .snowflakelifecycle_apply_base

needs:

- job: Plan Databases

variables:

LIFECYCLE_MANAGE_OBJECT: DATABASE

stage: "Apply Account Objects"

Apply Database-Level:

extends:

- .snowflakelifecycle_apply_base

needs:

- job: Plan Databases

variables:

LIFECYCLE_MANAGE_OBJECT: DATABASE_LEVEL

stage: "Apply Database Objects"

Apply Snowflake Grants:

extends:

- .snowflakelifecycle_apply_base

variables:

LIFECYCLE_MANAGE_OBJECT: GRANT

stage: "Apply Objects Grants"

Configuration

The Snowflake Object Lifecycle Engine supports defining multiple files for the Snowflake object configuration. In other words, you can define your entire Snowflake configuration, subdivided into different YAML files, as a human-readable, declarative format.

The YAML configuration files use the following reserved keywords to define the Snowflake object definitions:

| Keyword | Description | Type |

|---|---|---|

account | Defines the Account configuration | Map/Dictionary |

databases | Defines the Databases configuration | Map/Dictionary |

resource_monitors | Defines the Resource Monitors configuration | Map/Dictionary |

roles | Defines the Roles configuration | Map/Dictionary |

shares | Defines the Shares configuration | Map/Dictionary |

users | Defines the Users configuration | Map/Dictionary |

warehouses | Defines the Warehouses configuration | Map/Dictionary |

include | Defines a list of files to be included by SOLE | List |

specified_roles | Defines a list of roles upon which SOLE grant management can act | List |

specified_users | Defines a list of users upon which SOLE grant management can act | List |

Snowflake object definition files are stored in the directory specified by the CONFIGURATION_DIR parameter. This directory can contain one or more files with either the .yml or .yaml extensions.

You can specify all Snowflake objects in a single file. However, for better maintainability, divide them into separate files, logically grouping objects, such as all roles in one file and all users in another. SOLE will merge the object definitions from the individual files into a single declarative configuration.

The following code snippet demonstrates how to describe all objects in a single file:

databases:

DATAOPS_DATABASE:

schemas:

STAGING: ...

warehouses:

INGESTION: ...

If multiple files contain definitions of the same Snowflake object, the engine only uses the configuration from the last file; the earlier configurations are overridden.

In YAML, SOLE expects configuration keys to always be of string type. In the case of other types, SOLE will fail during compile time.

Users can specify other types in quotes thereby casting it to string.

For example, below will be considered a valid configuration

<configuration-key>:

"123": "<value>"

"True": "<value>"

"false": "<value>"

"1": "<value>"

"0": "<value>"

"null": "<value>"

whereas below will be considered an invalid configuration

<configuration-key>:

123: "<value>"

True: "<value>"

false: "<value>"

1: "<value>"

0: "<value>"

null: "<value>"

Like DataOps pipeline files, SOLE supports including other configuration files from different directories by using the include keyword and passing a list of files. The following example shows how to use the include keyword by passing multiple configuration files stored in different directories:

include:

- "/snowflake/ingest/database.yml"

- "/snowflake/sharing/database.yml"

Snowflake object names specified in the configuration are case-insensitive. In other words,warehouse_1 is converted to WAREHOUSE_1.

If such objects are referenced as parameters in another object, the name must be upper-case. Otherwise, the SOLE compiler will assume that it is a non-managed object.

Object identifiers: Special character support

The Snowflake documentation describes object identifiers as:

A string of characters (up to 255 characters in length) used to identify first-class Snowflake "named" objects, including table columns.

Several rules apply when creating object identifiers, also known as object names. By default, SOLE enforces the following Snowflake identifier rules on object names:

- The object name is converted to uppercase

- An object name cannot start with a number

- Only alphanumeric (A-Z and 0-9) characters and underscore (_) are supported in the object name

Both Snowflake and SOLE offer special character and case-sensitive identifier support, although SOLE currently doesn't support the following special characters as object identifiers, including table columns:

"'\|

On the other hand, it is vital to be aware that neither Snowflake nor SOLE currently supports identifiers with special characters for the following objects:

- Function

- External Function

- Procedure

Special character and case-sensitive identifier support must be enabled in SOLE by setting the parameter DATAOPS_PRESERVE_OBJECT_NAME. When this parameter is set, SOLE uses the object names defined in the SOLE configuration as is, without any modification.

If an object name is configured in lower-case, then the case is preserved. This means that object names object_1, OBJECT_1, and Object_1 would create three different objects and have to be referenced and used.

As highlighted above, Snowflake converts object names to uppercase by default. To use case-sensitive object names, the names must be enclosed in double quotes (").

For instance, the following SQL statement does not convert the database, schema, and table name to uppercase because each object identifier is enclosed in double quotes.

SELECT * FROM "database_1"."Schema_2"."Table-3"

On the other hand, the following SQL statement will return an error because double quotes do not enclose Table-3, and the - is not supported by Snowflake or SOLE as a valid character in the object identifier.

SELECT * FROM database_1.Schema_2.Table-3

Lastly, the following SQL statement converts the object names to uppercase, so each identifier is interpreted as DATABASE_1, SCHEMA_2, and TABLE_3.

SELECT * FROM database_1.Schema_2.Table_3

If relying on SOLE functionality to convert the object name to uppercase (database_1 -> DATABASE_1), enabling the DATAOPS_PRESERVE_OBJECT_NAME flag disables the conversion of the object name to uppercase, instructing SOLE to delete DATABASE_1 and add database_1.

This functionality also disables the object identifier rule verification performed in SOLE. If an unsupported identifier is used for an object such as the Table-3 example above, the APPLY action for the job will fail due to an error in Snowflake.

Using the DATAOPS_PRESERVE_OBJECT_NAME functionality leads to creating objects with special characters and case-sensitive identifiers. Such objects might not work out of the box with all orchestrators and all queries.

Data-type aliases

Snowflake uses aliases for data types such as TEXT for VARCHAR(16777216). Unless mandatory, it is preferable not to use such aliases in the Snowflake configuration because the SOLE Orchestrator will change these data types at runtime. We recommend using the exact data types in the configuration. These data-type or in-place changes are also logged as they occur. For instance, the SOLE Orchestrator will change the TEXT datatype to VARCHAR(16777216) and log this change in the log files. For a complete list of data types and their aliases refer to the Snowflake data type summary.

However, data-type aliases are sometimes necessary. The resource monitor object is a good example of this. The Snowflake Docs suggest using TIMESTAMP for the parameter start_timestamp. But a timestamp leads to an error when creating or updating the resource monitor object.

Why?

Currently, the resource monitor object only supports DATE as a valid value at the time of creation, but the date variable is converted to TIMESTAMP upon successful operation. This leads to SOLE marking the resource monitor with a data-type alias change to replace TIMESTAMP with DATE.

Cross-database object references

As SOLE operates on multiple databases and schemas as part of its lifecycle management, it doesn't set a database or schema as a session default when connecting to Snowflake. As a result, referencing a cross-database object requires specifying the object's fully qualified name.

If the referenced database is managed and environment-controlled by SOLE, the environment-based namespace is resolved by SOLE when referencing the object.

Such cross-database references can be provided in one of three ways:

Object reference by object name

The object reference by object name can be used when the referenced object belongs to the same schema and database.

databases:

DB_1:

schemas:

SCHEMA_1:

pipes:

PIPE_1:

copy_statement:

into:

table: "TABLE_1"

from:

stage: "STAGE_1"

If the prefix is set to DATAOPS and the suffix is set to PROD:

- The fully-qualified name for the table is

DATAOPS_DB_1_PROD.SCHEMA_1.TABLE_1 - The fully-qualified name for the stage is

DATAOPS_DB_1_PROD.SCHEMA_1.STAGE_1

Object reference by object and schema name

This object reference by object and schema name is used when a referenced object belongs in a different schema but is part of the same database.

databases:

DB_1:

schemas:

SCHEMA_1:

pipes:

PIPE_1:

copy_statement:

into:

schema: SCHEMA_2

table: "TABLE_1"

from:

schema: SCHEMA_3

stage: STAGE_1

If the prefix is set to DATAOPS and the suffix is set to PROD:

- The fully-qualified name for the table is

DATAOPS_DB_1_PROD.SCHEMA_2.TABLE_1 - The fully-qualified name for the stage is

DATAOPS_DB_1_PROD.SCHEMA_3.STAGE_1

Object reference by object, schema, and database name

The object reference by object, schema, and database can be used when a referenced object belongs to a different database.

databases:

DB_1:

schemas:

SCHEMA_1:

pipes:

PIPE_1:

copy_statement:

into:

database: DB_2

schema: SCHEMA_2

table: "TABLE_1"

from:

database: DB_3

schema: SCHEMA_3

stage: STAGE_1

Assuming that DB_2 is managed while DB_3 is not and the prefix is set to DATAOPS, and the suffix is set to PROD:

- The fully qualified name for the table is

DATAOPS_DB_2_PROD.SCHEMA_2.TABLE_1 - The fully qualified name for the stage is

DB_3.SCHEMA_3.STAGE_1

You can provide explicit object names even if the referenced object belongs to the same database and schema.

Using the DataOps vault

SOLE retrieves the Snowflake credentials stored in the DataOps Vault. Without these credentials, the SOLE orchestrator cannot access Snowflake to perform the required actions.

By default, the credentials are stored in the following format (map) within the vault:

SNOWFLAKE:

SOLE:

ACCOUNT: <account> # Value set in variable DATAOPS_SOLE_ACCOUNT

USERNAME: <username> # Value set in variable DATAOPS_SOLE_USERNAME

PASSWORD: <password> # Value set in variable DATAOPS_SOLE_PASSWORD

ROLE: <role> # Value set in variable DATAOPS_SOLE_ROLE

These values in these credentials can be overridden if they are present in a different vault path or the DataOps pipeline's default location. In other words, if the DATAOPS_VAULT() function is not used, these credentials are always stored in the default location.

For example:

The value of ACCOUNT is stored in the vault key SNOWFLAKE.PRODUCTION.ACCOUNT, and ROLE is stored in SNOWFLAKE.INGESTION.ROLE. In this scenario, the variable DATAOPS_SOLE_ACCOUNT can be set to DATAOPS_VAULT(SNOWFLAKE.PRODUCTION.ACCOUNT) and DATAOPS_SOLE_ROLE to DATAOPS_VAULT(SNOWFLAKE.INGESTION.ROLE) in the variables section of the job file or the variables.yml file. When the pipeline runs, the Secrets Manager Orchestrator extracts the Snowflake credentials and writes them to the job file or the config.yml file.

variables:

DATAOPS_SOLE_ACCOUNT: DATAOPS_VAULT(SNOWFLAKE.PRODUCTION.ACCOUNT)

DATAOPS_SOLE_ROLE: DATAOPS_VAULT(SNOWFLAKE.INGESTION.ROLE)

To learn how to configure key-pair authentication in with vault, refer to the key-pair vault configuration documentation.